> I get where you are coming from, but since you haven't said what you are using that forces these artificial limitations, it's hard to fully understand.

It shouldn't be hard to understand. I need more lanes, without compromise, than are available from the current 'enthusiast' AM4 platform. I've said this more than once.

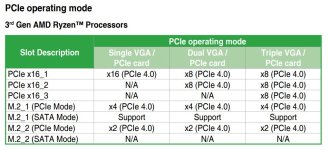

In reality it doesn't matter what I'm using, and it's not me forcing the artificial limitations; the motherboards are. PCIe bandwidth is not a substitute for lanes in all circumstances, although people seem set on constantly conflating the two. Your SM/enterprise-class example clearly shows that you benefited from PCIe 4.0 because it unlocked the bandwidth you required. That isn't my use case. I need lanes, not bandwidth, and PCIe 4.0 will not change that.
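
The lanes-versus-bandwidth distinction can be illustrated with a simplified model of PCIe link training: a link runs at the lowest generation and the narrowest width that both the slot and the card support. This is a sketch under stated assumptions, not a spec-accurate model; the card in the example is hypothetical, and the throughput figures are encoding-adjusted line rates that ignore packet and protocol overhead.

```python
from dataclasses import dataclass

# Raw transfer rate per lane (GT/s) and line-encoding efficiency for each PCIe generation.
# Gen 1 and Gen 2 use 8b/10b encoding; Gen 3 and Gen 4 use 128b/130b.
GEN_RATE = {
    1: (2.5, 8 / 10),
    2: (5.0, 8 / 10),
    3: (8.0, 128 / 130),
    4: (16.0, 128 / 130),
}


def per_lane_mb_s(gen: int) -> float:
    """Approximate usable MB/s for one lane, ignoring packet/protocol overhead."""
    gt_s, efficiency = GEN_RATE[gen]
    return gt_s * efficiency * 1000 / 8  # Gb/s of payload -> MB/s


@dataclass
class Port:
    gen: int    # highest PCIe generation this side supports
    width: int  # lanes this side provides (slot) or uses (card)


def negotiated_link(slot: Port, card: Port) -> tuple[int, int, float]:
    """Simplified link training: lowest common generation, narrowest common width."""
    gen = min(slot.gen, card.gen)
    width = min(slot.width, card.width)
    return gen, width, width * per_lane_mb_s(gen)


# Hypothetical x8 Gen 2 card: a Gen 4 slot gives it nothing a Gen 3 slot doesn't.
card = Port(gen=2, width=8)
print(negotiated_link(Port(gen=3, width=16), card))  # (2, 8, 4000.0)
print(negotiated_link(Port(gen=4, width=16), card))  # (2, 8, 4000.0)
# The same card in a Gen 4 slot wired for only x4 loses half its link.
print(negotiated_link(Port(gen=4, width=4), card))   # (2, 4, 2000.0)
```

Generation and width are separate constraints in this model: a newer generation only helps devices that support it, while a missing or narrowly wired slot caps any device regardless of generation.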

I mentioned that I have other cards wider than x1 and explained why using them is not optimal. I'll humour you by letting you know that, among other things, I use a PERC H710, and, as I alluded to, I compromise my GPU by using it.

> I used to use the Asus X99-E-10G WS for a client build/design that called for a huge number of expansion cards. It wasn't ideal, as it used PLX chips to provide its seven x16 PCI-E slots, but it had dual on-board 10GbE NICs, which was great. Then bam, Threadripper came along and made the 40 CPU-native lanes on X99 look amateurish. I still struggled: at least two of the x16 PCI-E slots were filled with quad-NVMe-to-x16 adapter cards using bifurcation, and we still needed 32 lanes for two GPUs, which pushed the design up to 64 lanes. That meant one of the GPUs had to run at x8 if we wanted to achieve the full potential of the NVMe drives. With PCI-E 4.0 that limitation is now gone, as the extra bandwidth it offers to the disk subsystem is immense. We are currently doing a redesign with Samsung 980 Pros in place, which means we can cut the number of drives by 50% while keeping the capacity and bandwidth, allowing us to add a further GPU into the mix, or a 40Gbps/100Gbps fibre card, depending on where the system will be used.
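
The lane arithmetic in the build above can be checked with a short sketch. The device list mirrors the description (two bifurcated quad-NVMe x16 adapters plus two x16 GPUs). `USABLE_LANES` is a hypothetical figure, since how many CPU lanes actually reach the slots depends on the board, and the bandwidth numbers are encoding-adjusted line rates that ignore protocol overhead.

```python
import math

# Approximate usable MB/s per lane after line encoding
# (8b/10b for Gen 2, 128b/130b for Gen 3 and Gen 4); protocol overhead is ignored.
PER_LANE_MB_S = {
    2: 5.0 * (8 / 10) * 1000 / 8,
    3: 8.0 * (128 / 130) * 1000 / 8,
    4: 16.0 * (128 / 130) * 1000 / 8,
}


def lane_shortfall(demand: dict[str, int], usable_lanes: int) -> tuple[int, int]:
    """Return (total lanes requested, lanes short of usable_lanes; 0 if it fits)."""
    total = sum(demand.values())
    return total, max(0, total - usable_lanes)


def nvme_pool_mb_s(drives: int, gen: int, lanes_per_drive: int = 4) -> float:
    """Aggregate link bandwidth of a pool of NVMe drives, each on its own x4 link."""
    return drives * lanes_per_drive * PER_LANE_MB_S[gen]


# The design described above: two bifurcated quad-NVMe adapters plus two GPUs.
design = {"nvme_adapter_1": 16, "nvme_adapter_2": 16, "gpu_1": 16, "gpu_2": 16}

# Hypothetical budget: the number of CPU lanes wired to slots varies by board.
USABLE_LANES = 60

total, short = lane_shortfall(design, USABLE_LANES)
print(total, short)  # 64 4

# Halving the drive count on PCIe 4.0 keeps the same aggregate link bandwidth.
print(math.isclose(nvme_pool_mb_s(8, gen=3), nvme_pool_mb_s(4, gen=4)))  # True
```

The second check is the arithmetic behind cutting the drive count by 50%: each Gen 4 x4 link carries roughly what two Gen 3 x4 links do, which frees an x16 slot's worth of lanes for another card.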

You had a bandwidth deficit here. I don't. This is an apples-to-oranges comparison, so while it was interesting to read, it offered nothing for my situation.

> Changing the I/O die sounds simple, but at the launch of Zen 2 the I/O dies were fabbed on 12/14nm, so adding more PCI-E lanes would have added quite a chunk of extra die area. That may have limited the ability to keep the dual-die options for the CPUs, and would also have added extra power-draw overhead when running the I/O flat out.

AMD designed a new I/O chip; of course they could have added another 8 lanes while they were at it. Unless you have first-hand knowledge of what it took to design the I/O die and why 8 lanes couldn't be added, I'll file this statement under 'speculation'. The fact remains that they missed an opportunity to drive differentiation.

> Saying they fumbled is a bit harsh, given that they came from a platform which might have had great boards but terrible CPUs. And let's not forget it was PCI-E 2.0, so your argument about the GPUs being held back would stand on that platform too.

Harsh but true. Again, total bandwidth is not a substitute for available lanes (and vice versa). I also wasn't asking for 990FXA on AM4; I was lamenting the loss of the flexibility its large lane allocation gave. AMD could easily have offered that while implementing PCIe 3.0. It's a bit of nonsense to bring up PCIe 2.0 as if that's what I wanted.

At this point, PCIe 4.0 is not a reason to buy for most people. Had AMD brought out PCIe 4.0 with more lanes than X370/X470, I'd have bought, and I'd have bought even at PCIe 3.0. Not for the bandwidth, but for the lanes. If AMD had brought out X470 with an uplift in PCIe lanes over X370, I'd have bought (where I didn't). See where I'm going with this? I don't think I'm unique in this regard.

So I had lots of 1 and 2TB ones that were cost-effective. I would have upgraded to TRX40 if the 3950X were available on it, but it's not, so I sit here waiting for something to fill the gap, much like I did when I moved from Z77. Back then I couldn't find a board to run all my gear due to lanes, as no one was doing PLX anymore after Avago hiked prices, and I didn't want to buy another board that would go out of date as soon as I started using it. Then TR came along, and I haz all the lanes.